Periodically we test various AI services to see if we should be using a different one on the backend of Transcriptive-A.I. We’re more interested in having the most accurate A.I. than in sticking with a particular service (or trying to develop our own). The different services have different costs, which is why Transcriptive Premium costs a bit more. Charging more for it gives us more flexibility in deciding which service to use.

This latest test will give you a good sense of how the different services compare, particularly in relation to Adobe’s transcription AI that’s built into Premiere.

The Tests

Short Analysis (i.e. TL;DR):

For well recorded audio, all the A.I. services are excellent. There isn’t a lot of difference between the best and worst A.I… maybe one or two words per hundred words. There is a BIG drop off as audio quality gets worse and you can really see this with Adobe’s service and the regular Transcriptive-A.I. service.

A 2% difference in accuracy is not a big deal. As you start getting up around 6-7% and higher, the additional time it takes to fix errors in the transcript starts to become really significant. Every additional 1% in accuracy means 3.5 minutes less clean up time (for a 30 minute clip). So small improvements in accuracy can make a big difference if you (or your Assistant Editor) need to clean up a long transcript.

So when you see an 8% difference between Adobe and Transcriptive Premium, realize it’s going to take you about 25-30 minutes longer to clean up a 30 minute Adobe transcript.
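If you want to run that math for your own clips, here’s a minimal sketch in Python. The 3.5 minutes per 1% figure is the rule of thumb above for a 30 minute clip. Scaling it in proportion to clip length is an assumption of this sketch, and the function name is made up for illustration.

```python
# Rule of thumb from this post: each 1% of accuracy is worth about
# 3.5 minutes of clean up time on a 30 minute clip.
MINUTES_PER_POINT_ON_30_MIN_CLIP = 3.5


def extra_cleanup_minutes(accuracy_gap_points, clip_minutes=30.0):
    """Estimate the extra clean up time for a given accuracy gap.

    accuracy_gap_points: difference in accuracy, in percentage points
    clip_minutes: clip length; linear scaling with length is an assumption
    """
    scale = clip_minutes / 30.0
    return accuracy_gap_points * MINUTES_PER_POINT_ON_30_MIN_CLIP * scale


# An 8 point gap on a 30 minute clip
print(extra_cleanup_minutes(8))  # 28.0
```

That 28 minutes is where the “about 25-30 minutes” figure comes from.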

Takeaway: For high quality audio, you can use any of the services… Adobe’s free service or the .04/min TS-AI service. For audio of medium to poor quality, you’ll save yourself a lot of time by using Transcriptive-Premium. (Getting Adobe transcripts into Transcriptive requires jumping through a couple of hoops. Adobe didn’t make it as easy as they could’ve, but it’s not hard. Here’s how to import Adobe transcripts into Transcriptive.)

(For more info on how we test, see this blog post on testing AI accuracy)

Long Analysis

When we do these tests, we look at two graphs:

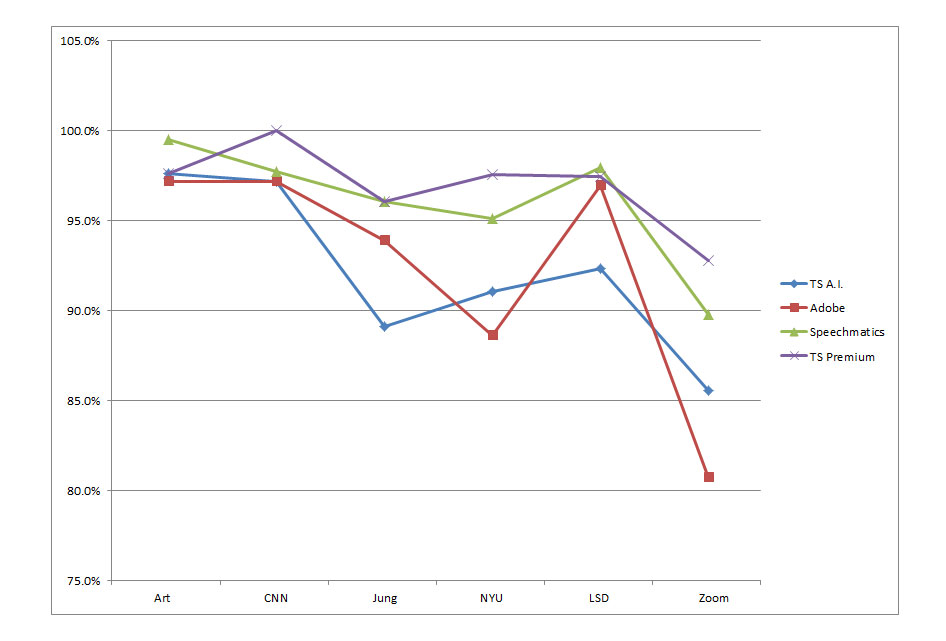

- How each A.I. performed for specific clips

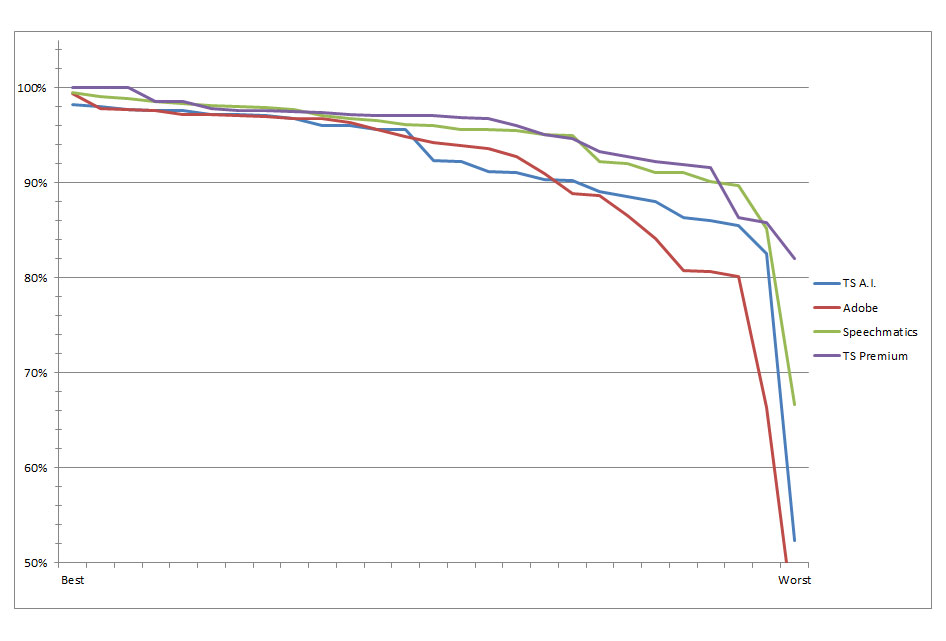

- The accuracy curve for each A.I. which shows how it did from its Best result to Worst result.

The important thing to realize when looking at the Accuracy Curves (#2 above) is that the corresponding points on each curve are usually different clips. The best clip for one A.I. may not have been the best clip for a different A.I. I find this Overall Accuracy Curve (OAC) to be more informative than the ‘clip-by-clip’ graph. A given A.I. may do particularly well or poorly on a single clip, but the OAC smooths the variation out and you get a better representation of overall performance.
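To make the idea concrete, here’s a minimal sketch in Python of how an accuracy curve can be built from per-clip results. It uses the six-clip numbers from the results table further down in this post. The `results` dictionary and the `accuracy_curve` function are hypothetical names for this illustration.

```python
# Per-clip accuracy (%) for each service, from the six-clip results table.
results = {
    "TS A.I.": {"Interview": 97.2, "Art": 97.6, "NYU": 91.1,
                "LSD": 92.3, "Jung": 89.1, "Zoom": 85.5},
    "Adobe": {"Interview": 97.2, "Art": 97.2, "NYU": 88.6,
              "LSD": 96.9, "Jung": 93.9, "Zoom": 80.7},
    "Speechmatics": {"Interview": 97.8, "Art": 99.5, "NYU": 95.1,
                     "LSD": 98.0, "Jung": 96.1, "Zoom": 89.8},
    "TS Premium": {"Interview": 100.0, "Art": 97.6, "NYU": 97.6,
                   "LSD": 97.4, "Jung": 96.1, "Zoom": 92.8},
}


def accuracy_curve(per_clip):
    """Sort one service's clips from its best result to its worst."""
    return sorted(per_clip.items(), key=lambda item: item[1], reverse=True)


for service, per_clip in results.items():
    curve = accuracy_curve(per_clip)
    best_clip, best_accuracy = curve[0]
    print(f"{service}: best clip is {best_clip} at {best_accuracy}%")
```

Each service gets sorted on its own, which is why the same position on two curves can be two different clips. Here the regular TS A.I. does best on the Art clip while TS Premium does best on the Interview clip.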

Take a look at the charts for this test (the audio files used are available at the bottom of this post):

All of the A.I. services will fall off a cliff, accuracy-wise, as the audio quality degrades. Any result lower than about 90% accuracy is probably going to be better done by a human. Certainly anything below 80%. At 80% it will very likely take more time to clean up the transcript than to just do it manually from scratch.

The two things I look for in the curve are where it breaks below 95% and where it breaks below 90%. And, of course, how that compares to the other curves. The longer the curve stays above those percentages, the more audio degradation a given A.I. can deal with.
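Here’s a rough Python sketch of that check, using the six-clip results from the table further down. For each service it counts how many points on the best-to-worst curve stay at or above a threshold before the curve breaks below it. The names `accuracies` and `clips_before_break` are made up for this example.

```python
# Accuracy (%) per service for the six test clips, in the table's clip order:
# Interview, Art, NYU, LSD, Jung, Zoom.
accuracies = {
    "TS A.I.": [97.2, 97.6, 91.1, 92.3, 89.1, 85.5],
    "Adobe": [97.2, 97.2, 88.6, 96.9, 93.9, 80.7],
    "Speechmatics": [97.8, 99.5, 95.1, 98.0, 96.1, 89.8],
    "TS Premium": [100.0, 97.6, 97.6, 97.4, 96.1, 92.8],
}


def clips_before_break(values, threshold):
    """Count the points on the best-to-worst curve before it drops below threshold."""
    count = 0
    for accuracy in sorted(values, reverse=True):
        if accuracy < threshold:
            break
        count += 1
    return count


for service, values in accuracies.items():
    above_95 = clips_before_break(values, 95.0)
    above_90 = clips_before_break(values, 90.0)
    print(f"{service}: {above_95} of 6 clips at 95%+, {above_90} of 6 at 90%+")
```

On these six clips, TS Premium is the only service whose curve never breaks below 90%.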

You’re probably thinking, well, that’s just six clips! True, but if you choose six clips with a good range of quality, from great to poor, then the curve will be roughly the same even if you had more clips. Here’s the full test with about 30 clips:

While the curves look a little different (the regular TS A.I. looks better in this graph), mostly they follow the pattern of the six clip OAC. And the ‘cliffs’ become more apparent… the points where a given level of audio quality causes AI performance to drop to a lower tier. Most of the AIs will stay at a certain accuracy for a while, then drop down, hold there for a bit, drop down again, etc. until the audio degrades so much that the AI basically fails.

Here are the actual test results:

| Clip | TS A.I. | Adobe | Speechmatics | TS Premium |
| --- | --- | --- | --- | --- |
| Interview | 97.2% | 97.2% | 97.8% | 100.0% |
| Art | 97.6% | 97.2% | 99.5% | 97.6% |
| NYU | 91.1% | 88.6% | 95.1% | 97.6% |
| LSD | 92.3% | 96.9% | 98.0% | 97.4% |
| Jung | 89.1% | 93.9% | 96.1% | 96.1% |
| Zoom | 85.5% | 80.7% | 89.8% | 92.8% |

So those are the basics of testing different A.I.s! Here are the clips we used for the smaller test to give you an idea of what’s meant by ‘High Quality’ or ‘Poor Quality’. The more jargon, background noise, accents, soft speaking, etc. there is in a clip, the harder it’ll be for the A.I. to produce good results. And you can hear that below. You’ll notice that all the clips are 1 to 1.5 minutes long. We’ve found that as long as the short clip is representative of the longer recording it’s taken from, you don’t get any additional info from testing the whole thing. An hour long clip will produce similar results to one minute of it, as long as that one minute has the same speakers, jargon, background noise, etc.

Any questions or feedback, please leave a note in the Comments section! (or email us at cs@nulldigitalanarchy.com)